Validating the foundations for AI in fracture care

Artificial intelligence (AI) is increasingly seen as a promising tool to support clinical decision‑making in trauma and orthopedic surgery. Before such tools can be used responsibly, however, they must be trained on imaging data that are not only large in volume, but also consistently and reliably annotated.

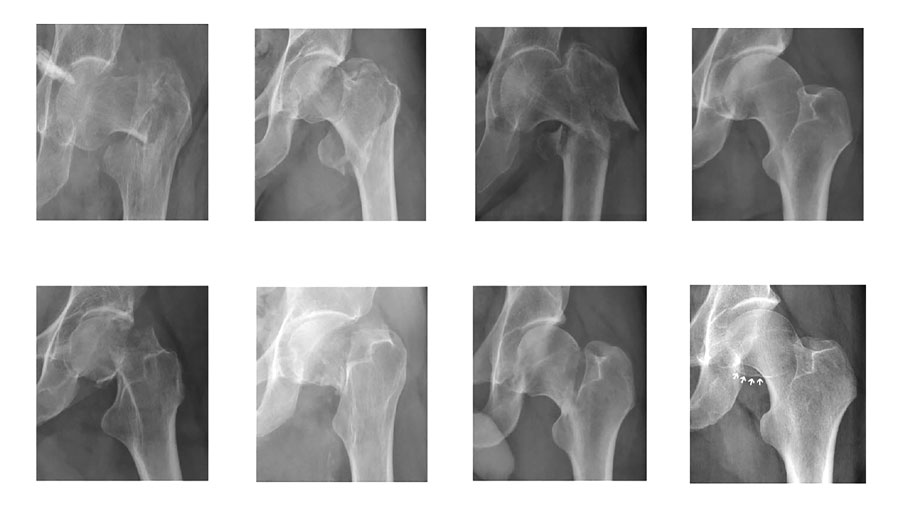

A recently completed collaboration between the AO Foundation and the University of Turin, published in Injury as a proof‑of‑concept (PoC) study and supported by AO Innovation Funding, addresses this challenge by validating the “ground truth” of a large radiographic image database of proximal femur fractures.

The study assessed how consistently expert surgeons classify fractures on standard anteroposterior radiographs using the AO/OTA system, and whether these annotations are robust enough to support future AI development.

According to Tracy Zhu, project leader under the AO Innovation Translation Center’s (AO ITC’s) Medical Affairs and co‑author of the study, data quality remains the key bottleneck for AI in orthopedics: “As AI is increasingly revolutionizing orthopedic care, high-quality, diverse, clinically validated and curated imaging data has become the critical constraint for developing reliable diagnostic, surgical planning, and predictive AI tools.”

Strong agreement—within known limits

Two independent groups of expert surgeons, one from the AO Foundation and one from the University of Turin, reviewed and classified the same set of 300 radiographs. Overall agreement was high, confirming that expert‑based annotations can be reliable when a structured workflow is applied. Where disagreements occurred, they were not random but clustered around specific fracture boundaries, particularly between AO/OTA 31A1 and 31A2 fractures, and between “no fracture” and 31B fractures.
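To illustrate how agreement between two rater groups can be quantified, here is a minimal Python sketch. It assumes Cohen's kappa as the agreement measure; the article does not state which statistic the study used. The function names and the toy labels are hypothetical and are not data from the study.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equally long, non-empty label lists")
    n = len(labels_a)
    # Share of items on which both raters chose the same class.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each rater's class frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


def disagreement_pairs(labels_a, labels_b):
    """Count the unordered label pairs on which the raters differ."""
    return Counter(
        tuple(sorted((a, b))) for a, b in zip(labels_a, labels_b) if a != b
    )


# Hypothetical toy labels (assumption), one entry per radiograph.
group_1 = ["31A1", "31A1", "31A2", "31B", "none", "31A2", "31B", "none"]
group_2 = ["31A1", "31A2", "31A2", "31B", "31B", "31A2", "31B", "none"]

kappa = cohen_kappa(group_1, group_2)
pairs = disagreement_pairs(group_1, group_2)
print(f"kappa = {kappa:.2f}")
print(pairs.most_common())
```

Tallying disagreements by label pair is what makes a clustering pattern visible: if most mismatches fall on one or two class boundaries, they are systematic rather than random.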

The findings also reinforce that fracture classification in imaging is not absolute. “Ground truth in imaging diagnostics of fractures will always be probabilistic and context dependent, not absolute,” Zhu notes. “Real-world images often deviate from idealized AI training assumptions. For AI models to be effective in practical scenarios, they must be robust to diverse and variable imaging data reflective of the actual clinical environment, and they must also mimic the real-world clinical output.”

Therefore, it is essential to reframe AI’s role from “automated classifier” to “support partner” in decision making. Like a human expert, an AI tool must be able to identify when the data itself is inadequate or flawed. Consequently, the AI tool must learn to flag uncertainty rather than enforce rigid classification.
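One way a tool might flag uncertainty instead of forcing a label is to decline to classify when its confidence is low. The sketch below is only an illustration under stated assumptions: the function name `classify_or_flag`, the 0.8 threshold, and the example probabilities are all hypothetical and do not come from the study.

```python
def classify_or_flag(probabilities, min_confidence=0.8):
    """Return the top label, or flag the case for review if confidence is low.

    `probabilities` maps class labels to model scores that sum to about 1.
    The 0.8 default is an arbitrary illustrative threshold (assumption).
    """
    ranked = sorted(probabilities.items(), key=lambda kv: kv[1], reverse=True)
    top_label, top_score = ranked[0]
    if top_score < min_confidence:
        # Do not force a class; report the two most likely candidates instead.
        return {
            "label": None,
            "flagged": True,
            "candidates": [label for label, _ in ranked[:2]],
        }
    return {"label": top_label, "flagged": False, "candidates": [top_label]}


# Hypothetical model outputs (assumption), not results from the study.
clear_case = classify_or_flag({"31A1": 0.92, "31A2": 0.05, "31B": 0.03})
borderline_case = classify_or_flag({"31A1": 0.48, "31A2": 0.46, "31B": 0.06})
print(clear_case)
print(borderline_case)
```

In this sketch a borderline 31A1/31A2 image is returned as flagged with both candidates listed, leaving the final call to the surgeon.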

Identifying areas of expert disagreement provides valuable guidance for how AI tools should be designed. In real‑world practice, surgeons frequently work with imperfect or ambiguous images that require further examination and additional images. Future AI systems must be able to reflect this reality and assist with triage.

Tracy Zhu, Project Leader AO ITC Medical Affairs

Enabling responsible innovation

From the AO ITC’s perspective, the study fits into its broader strategy of enabling responsible innovation. The AO Foundation does not aim to develop its own algorithms, but to provide a trusted foundation of high‑quality data that others can build upon. As Stefano Crespan, who leads the AO Innovation Funding’s Strategy Fund, explains: “Our next step will be a pilot to confirm whether the AO can establish a secure and trusted platform to access medical images vetted and annotated by AO experts, thereby accelerating innovation in musculoskeletal care.”

By rigorously testing expert agreement and clearly defining areas of uncertainty, the PoC study establishes an important foundation for future AI development in trauma and orthopedics. It demonstrates that trustworthy AI begins not with automation, but with validated clinical expertise—and with a clear understanding of both consensus and nuance in fracture interpretation.

Stefano Crespan, AO Innovation Funding

Why this matters

- More consistent fracture classification: The study shows that expert-annotated radiographs can reach strong agreement, providing a reliable foundation for AI tools that support consistency across clinicians and centers.

- Clearer handling of borderline cases: By identifying where expert disagreement clusters, the study highlights the information, and the gaps in it, that decision support tools must expose rather than obscure.

- AI that reflects real clinical practice: Instead of forcing definitive answers, future AI systems trained on validated data can learn to flag uncertainty and inconclusive cases, mirroring how surgeons work with imperfect imaging in everyday practice.

- Reduced cognitive and workflow burden: Validated AI-based radiograph analysis has the potential to assist with triage, prioritization, and standardized assessments, helping surgeons manage growing imaging volumes without replacing clinical responsibility.
